Edge AI is rapidly becoming a foundational element of modern digital systems, enabling data processing directly on devices rather than relying on centralized cloud infrastructure. This shift is driven by the need for lower latency, improved data privacy, and real-time decision-making in industries such as robotics, surveillance, and industrial automation. In this context, platforms like NVIDIA Jetson Orin Nano are transforming how AI workloads are deployed at the edge.

The NVIDIA Jetson ecosystem provides a scalable environment for embedded AI development, combining optimized hardware with a mature software stack. Solutions like NVIDIA Jetson Orin are designed to support everything from prototyping to production deployment. Developers benefit from pre-integrated tools and frameworks that accelerate development and simplify integration into existing systems.

What is the Jetson Orin Nano? Answering this question is essential for organizations exploring edge AI strategies. The Jetson Orin Nano generation introduces significant improvements in performance and efficiency, enabling advanced inference directly on compact devices. As a result, the Orin Nano platform is increasingly used for applications requiring real-time analytics, computer vision, and autonomous decision-making at the edge.

What Is The NVIDIA Jetson Orin Nano Super Developer Kit?

The NVIDIA Jetson Orin Nano Developer Kit is a compact yet powerful development platform designed for building and deploying AI-powered applications at the edge. It serves as an entry point into the Jetson Orin family, offering substantial computational performance in a small form factor. The Orin Nano platform is specifically optimized for edge inference workloads, making it a practical solution for applications that require on-device processing rather than relying on cloud infrastructure.

From a positioning standpoint, the Jetson Orin platform sits between lightweight prototyping hardware and enterprise-grade AI systems. It allows teams to develop production-ready solutions without investing in expensive infrastructure. For many organizations, this balance between performance and accessibility is critical, especially when scaling from proof-of-concept to real-world deployment.

The target audience for the NVIDIA Jetson Orin Nano Developer Kit is diverse. It includes AI developers building machine learning models, startups creating smart devices, and enterprises integrating AI into existing systems. Robotics engineers, IoT solution architects, and system integrators also benefit from the flexibility and performance offered by the platform. The ability to run multiple neural networks concurrently makes it particularly attractive for complex applications such as multi-camera video analytics and autonomous navigation.

One of the key benefits of the platform is its ability to accelerate development cycles. By providing a pre-configured environment with optimized libraries, developers can focus on model development and deployment rather than infrastructure setup. This reduces engineering overhead and minimizes integration risks.

Another important advantage is scalability. Solutions developed on the Orin Nano can be easily migrated to more powerful Jetson modules if needed, ensuring long-term flexibility. This makes it a strategic choice for organizations planning to expand their AI capabilities over time.

Key Specifications and Hardware Overview

The NVIDIA Jetson Orin Nano combines a compact form factor with powerful hardware designed for edge AI workloads. At its core, the platform features a multi-core CPU for general processing and an NVIDIA Ampere-based GPU optimized for parallel computation and neural network inference. This architecture allows Jetson Orin Nano to efficiently handle both traditional tasks and AI-driven operations.

Memory and storage are configured to support real-time data processing and multi-tasking. With high-bandwidth RAM and flexible storage options, the system can run multiple models simultaneously while maintaining stable performance. The NVIDIA Jetson Orin Nano delivers strong AI performance measured in TOPS, enabling advanced inference workloads across applications such as computer vision and robotics.

Power efficiency is a key advantage of the Jetson Orin Nano platform. Operating within a low-power envelope, it is suitable for embedded systems, mobile devices, and remote deployments. In terms of connectivity, Jetson Orin Nano supports multiple interfaces, including USB, camera inputs, and networking options, which simplifies integration with sensors and external devices.

Overall, Jetson Orin Nano capabilities provide a balanced combination of performance, efficiency, and flexibility for modern edge AI systems.

Software Ecosystem and Development Tools

The effectiveness of NVIDIA Jetson Orin Nano is strongly tied to its software ecosystem, which simplifies the development, optimization, and deployment of AI applications at the edge. The platform is powered by the JetPack SDK, a comprehensive environment that includes drivers, libraries, and tools required to build production-ready solutions. This ecosystem allows developers to focus on model performance and integration rather than low-level system configuration.

Support for modern AI frameworks and optimized libraries ensures that Jetson Orin Nano capabilities can be fully utilized in real-world scenarios. Developers can efficiently run neural network models for inference, optimize performance, and deploy applications across various devices. The integration of GPU acceleration and specialized tools reduces latency and improves overall system efficiency.

Key components of the ecosystem include:

- JetPack SDK – provides a complete development environment for NVIDIA Jetson Orin and embedded AI systems.

- CUDA – enables parallel processing on the GPU for high-performance computing and model acceleration.

- TensorRT – optimizes neural network inference for faster processing and lower latency.

- DeepStream – supports real-time video analytics and multi-stream processing at the edge.

- AI frameworks (TensorFlow, PyTorch) – allow seamless model development, training, and deployment.

This ecosystem makes Orin Nano a practical choice for scalable AI solutions, reducing development complexity while ensuring high performance in edge environments.

Jetson Orin Nano Capabilities

The NVIDIA Jetson Orin Nano is designed to deliver high-performance edge AI processing, enabling devices to run neural network models locally without relying on cloud infrastructure. This reduces latency, improves data privacy, and ensures real-time responsiveness in critical applications. The Jetson Orin Nano capabilities make it suitable for scenarios where immediate decision-making is required, such as robotics, surveillance, and industrial systems.

One of its core strengths is real-time inference: the system processes data streams and produces results as the data arrives, rather than after a round trip to a remote server. This is essential for applications like video analytics, where delays can impact performance and safety. The platform also excels in computer vision, supporting tasks such as object detection, tracking, and image analysis across multiple inputs.
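To make "real-time" concrete, it helps to express it as a per-frame time budget. The sketch below is an illustration only: the function names are hypothetical, the numbers are made up, and it assumes frames from all cameras are processed one after another on a single device.

```python
def per_frame_budget_ms(fps: float, streams: int = 1) -> float:
    """Time available to process one frame, in milliseconds, when one device
    handles `streams` cameras at `fps` frames per second each and frames are
    processed sequentially (an assumption of this sketch)."""
    if fps <= 0 or streams < 1:
        raise ValueError("fps must be positive and streams must be at least 1")
    return 1000.0 / (fps * streams)


def keeps_up(inference_ms: float, fps: float, streams: int = 1) -> bool:
    """True if a measured per-frame inference time fits inside the budget."""
    return inference_ms <= per_frame_budget_ms(fps, streams)


# Hypothetical figures, for illustration only.
print(round(per_frame_budget_ms(fps=30), 2))             # one 30 FPS camera
print(round(per_frame_budget_ms(fps=30, streams=4), 2))  # four 30 FPS cameras
print(keeps_up(inference_ms=12.0, fps=30, streams=4))
```

With these example numbers, a model that takes 12 ms per frame fits comfortably for one or two 30 FPS cameras but not for four, which is the kind of check worth running against measured latencies before committing to a multi-camera design.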

In robotics and automation, Jetson Orin Nano enables autonomous systems to interpret sensor data and respond dynamically to their environment. It also supports multi-stream video processing, making it effective for deployments involving multiple cameras or data sources. Overall, NVIDIA Jetson Orin Nano provides a balanced combination of performance, efficiency, and scalability for embedded AI systems operating at the edge.

Advantages and Limitations

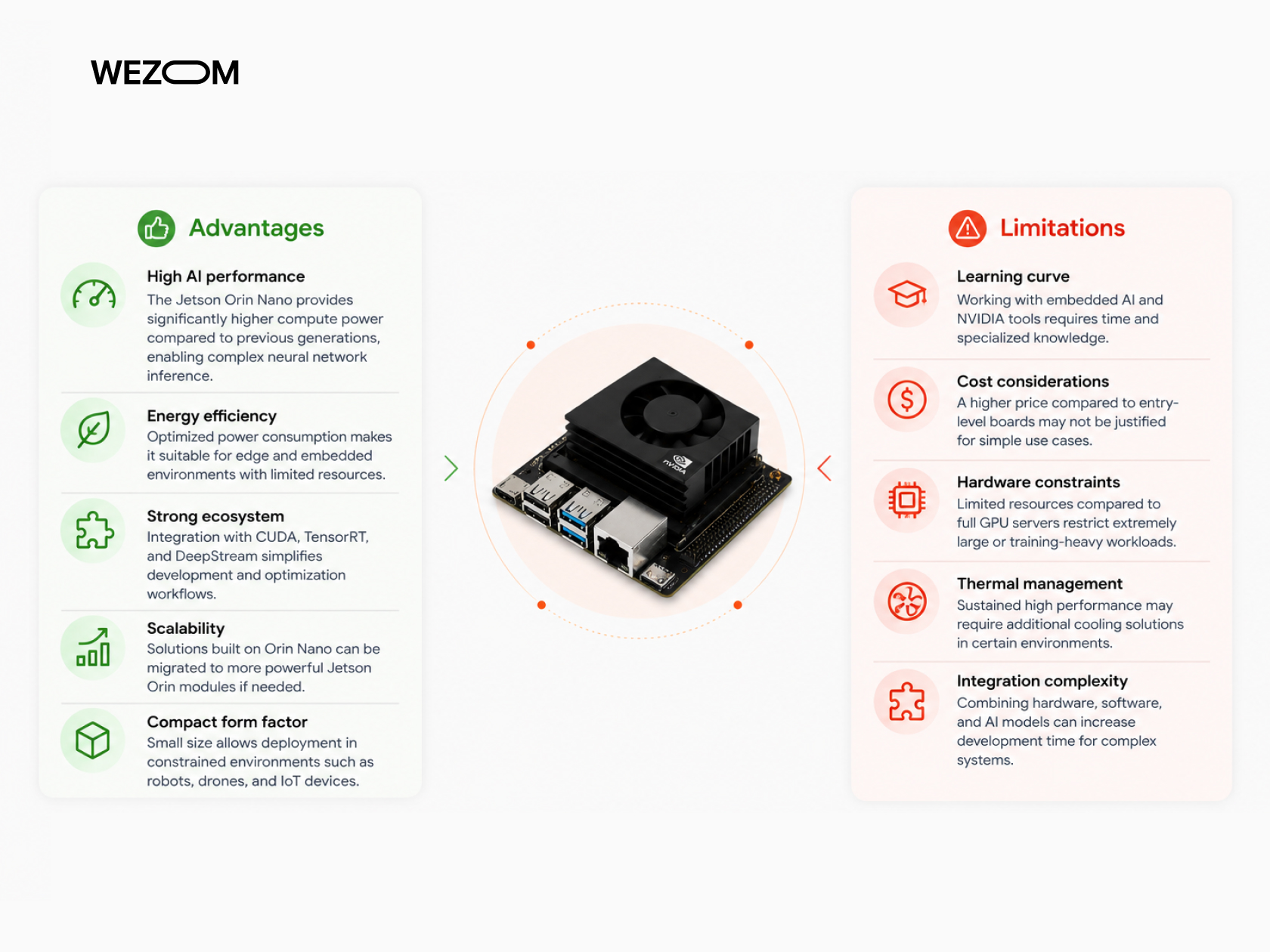

When evaluating NVIDIA Jetson Orin Nano, it is important to consider both its strengths and potential constraints in real-world implementations. While the platform delivers strong performance for edge AI, its effectiveness depends on project requirements, team expertise, and deployment conditions.

Advantages

- High AI performance – the Jetson Orin Nano provides significantly higher compute power compared to previous generations, enabling complex neural network inference.

- Energy efficiency – optimized power consumption makes it suitable for edge and embedded environments with limited resources.

- Strong ecosystem – integration with CUDA, TensorRT, and DeepStream simplifies development and optimization workflows.

- Scalability – solutions built on Orin Nano can be migrated to more powerful Jetson Orin modules if needed.

- Compact form factor – small size allows deployment in constrained environments such as robots, drones, and IoT devices.

Limitations

- Learning curve – working with embedded AI and NVIDIA tools requires time and specialized knowledge.

- Cost considerations – a higher price compared to entry-level boards may not be justified for simple use cases.

- Hardware constraints – limited resources compared to full GPU servers restrict extremely large or training-heavy workloads.

- Thermal management – sustained high performance may require additional cooling solutions in certain environments.

- Integration complexity – combining hardware, software, and AI models can increase development time for complex systems.

Real-World Use Cases of Jetson Orin Nano

The NVIDIA Jetson Orin Nano is widely used in industries where real-time processing, low latency, and edge inference are critical. Its ability to run neural network models locally makes it effective for scalable and autonomous systems without relying on cloud infrastructure. Below are key areas where Jetson Orin Nano delivers value.

Computer Vision and Smart Surveillance

The NVIDIA Jetson Orin Nano enables real-time video analytics with object detection, tracking, and anomaly detection. It processes multiple streams locally, improving response time and data security for surveillance systems.

Smart Retail and Customer Analytics

Retailers use Jetson Orin Nano to analyze customer behavior, generate heatmaps, and manage queues. This allows real-time insights and personalized experiences without cloud dependency.

Industrial Automation and Manufacturing

In manufacturing, NVIDIA Jetson Orin Nano supports quality inspection and predictive maintenance by analyzing sensor and visual data locally, reducing downtime and improving efficiency.

Robotics and Autonomous Systems

Robotics systems use Jetson Orin Nano for navigation, obstacle avoidance, and real-time decision-making based on sensor input, enabling autonomous operations.

Drones and Aerial Intelligence

Drones equipped with NVIDIA Jetson Orin Nano perform onboard video processing, object tracking, and mapping, reducing latency and enabling real-time analytics in dynamic environments.

Healthcare and Medical Devices

The platform supports medical imaging analysis and patient monitoring with local inference, ensuring low latency and compliance with data privacy requirements.

Smart Cities and Transportation

Jetson Orin enables traffic monitoring, smart parking, and public safety analytics by processing data locally and supporting scalable urban infrastructure.

Agriculture and Environmental Monitoring

Farmers use Jetson Orin Nano for crop monitoring, pest detection, and smart irrigation by analyzing environmental and visual data in real time.

Edge AI in IoT and Connected Devices

In IoT systems, NVIDIA Jetson Orin Nano powers smart cameras and sensors, enabling real-time analytics and automation directly on connected devices.

How Businesses Can Leverage Embedded AI Systems

Adopting platforms like NVIDIA Jetson Orin Nano allows businesses to shift toward real-time, data-driven operations at the edge. Instead of relying on cloud processing, organizations can deploy AI directly on devices, reducing latency and improving responsiveness in critical workflows.

One of the main benefits is process automation. Using Jetson Orin Nano, companies can automate tasks such as quality inspection, monitoring, and data analysis, reducing manual effort and increasing consistency. This is especially valuable in manufacturing, logistics, and retail environments.

Cost optimization is another key advantage. While initial investment in NVIDIA Jetson Orin may be required, businesses can lower long-term expenses by reducing cloud usage, minimizing downtime, and improving operational efficiency. Local processing also reduces bandwidth costs and dependency on external infrastructure.
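The bandwidth argument can be checked with simple arithmetic. The following sketch compares streaming raw video to the cloud with sending only detection metadata from the device. Every figure in it (bitrate, event count, event size, camera count) is a hypothetical placeholder, not a measurement, and the function names are illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def stream_gb_per_day(bitrate_mbps: float, cameras: int) -> float:
    """Data uploaded per day if every camera streams video to the cloud."""
    megabits = bitrate_mbps * SECONDS_PER_DAY * cameras
    return megabits / 8 / 1000  # megabits -> megabytes -> gigabytes (decimal)


def events_gb_per_day(events_per_day: int, bytes_per_event: int, cameras: int) -> float:
    """Data uploaded per day if only detection metadata leaves the device."""
    return events_per_day * bytes_per_event * cameras / 1e9


# Hypothetical deployment: 10 cameras at 4 Mbps, versus 5,000 events of
# 500 bytes per camera per day when inference runs on the device.
cloud = stream_gb_per_day(bitrate_mbps=4, cameras=10)
edge = events_gb_per_day(events_per_day=5000, bytes_per_event=500, cameras=10)
print(f"cloud streaming: {cloud:.1f} GB/day, edge metadata: {edge:.3f} GB/day")
```

Substituting real bitrates and event rates from a pilot deployment gives a first-order estimate of how much upstream traffic local inference removes.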

Real-time decision-making becomes possible with embedded AI. Systems can analyze data and respond to events as they occur, which is critical for applications like security, healthcare, and industrial automation. Because inference runs on the Jetson Orin Nano itself, insights are generated without the delay of a round trip to the cloud.

Finally, scalability provides long-term value. Solutions built on Orin Nano can be expanded across devices or upgraded within the Jetson Orin ecosystem, allowing businesses to grow their AI capabilities while maintaining performance and integration consistency.

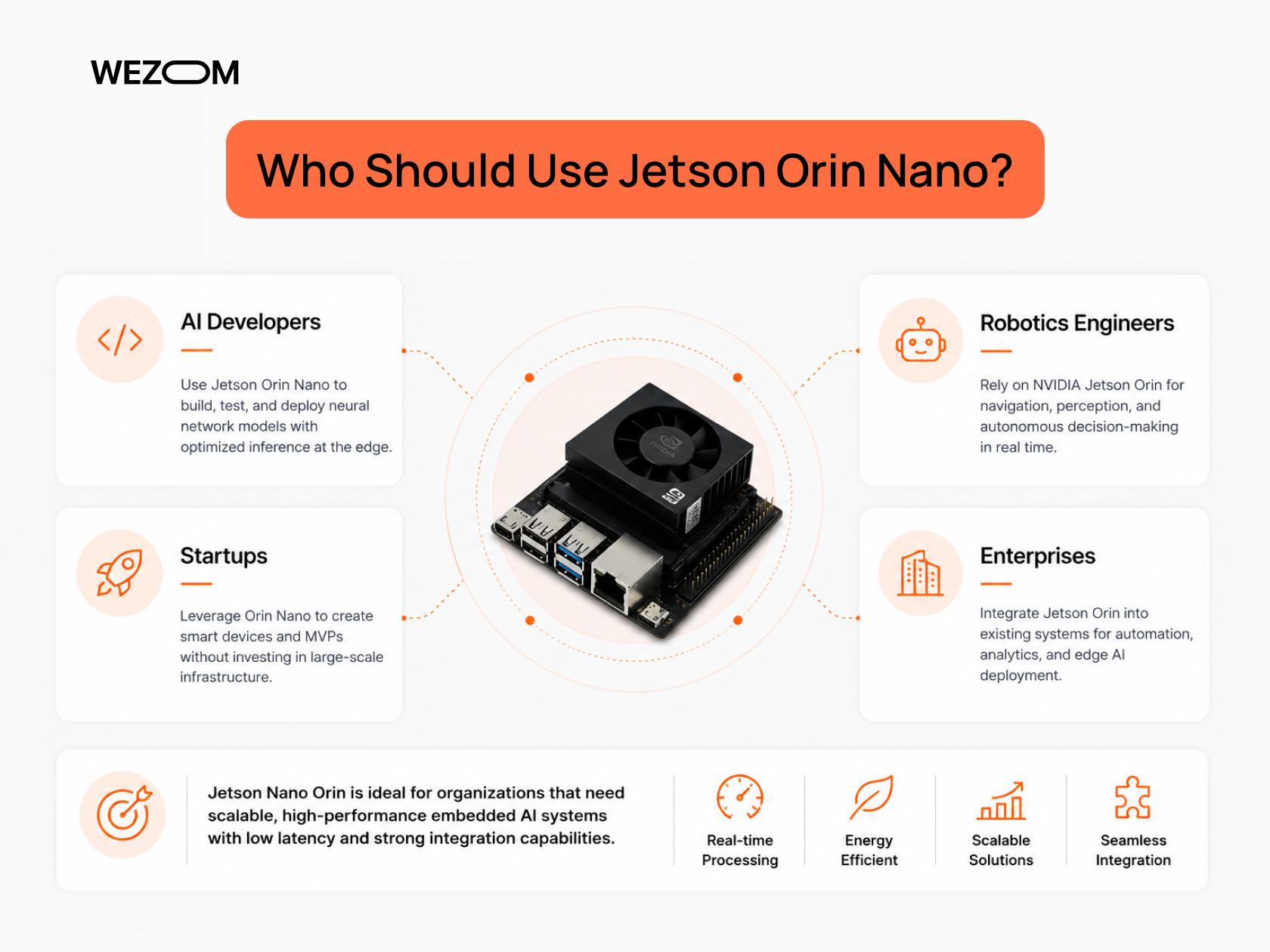

Who Should Use Jetson Orin Nano?

The NVIDIA Jetson Orin Nano Developer Kit is best suited for teams building AI-powered edge solutions that require real-time processing and efficient deployment. Its balance of performance and energy efficiency makes it practical for both prototyping and production environments.

- AI developers – use Jetson Orin Nano to build, test, and deploy neural network models with optimized inference at the edge.

- Robotics engineers – rely on NVIDIA Jetson Orin for navigation, perception, and autonomous decision-making in real time.

- Startups – leverage Orin Nano to create smart devices and MVPs without investing in large-scale infrastructure.

- Enterprises – integrate Jetson Orin into existing systems for automation, analytics, and edge AI deployment.

Overall, Jetson Orin Nano is ideal for organizations that need scalable, high-performance embedded AI systems with low latency and strong integration capabilities.

WEZOM's Practical Experience and Applications

From a practical perspective, implementing embedded AI systems requires more than just selecting the right hardware. It involves designing architectures that can handle real-time data processing, integrating multiple components, and ensuring reliability in production environments. WEZOM’s experience in building AI-driven systems highlights the importance of a holistic approach to development.

One of the key areas of expertise is building production-ready AI systems. This involves not only developing models but also optimizing them for edge deployment. Techniques such as model compression, quantization, and hardware acceleration are essential for achieving the desired performance on devices like the NVIDIA Jetson Orin Nano. Without these optimizations, even powerful hardware may fail to deliver expected results.
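As a rough illustration of what quantization does, the sketch below applies symmetric per-tensor INT8 quantization to a small list of weights in plain Python. It is a teaching example under simplifying assumptions, not how TensorRT or any specific toolchain implements it; production tools also calibrate activations and often use per-channel scales. The function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in
    [-127, 127] using a single scale factor."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantized]


# Made-up weights, for illustration only.
weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"scale={scale:.6f}", f"worst error={worst_error:.6f}")
```

Each weight now occupies one byte instead of four, at the cost of a rounding error bounded by half the scale factor. Whether that error is acceptable has to be validated on the actual model and data.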

Integration with hardware and cloud systems is another critical aspect. Edge devices often operate as part of a larger ecosystem that includes cloud platforms, data pipelines, and user interfaces. Ensuring seamless communication between these components is essential for system efficiency. WEZOM focuses on creating architectures that balance edge and cloud processing, leveraging the strengths of both approaches.

Working with real-time data pipelines requires careful design and testing. Systems must be able to handle continuous data streams without interruptions. This involves implementing robust data processing frameworks, monitoring tools, and failover mechanisms. The goal is to ensure that applications remain reliable even under high-load conditions.
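One common way to keep a pipeline responsive under load is a bounded buffer that discards the oldest frames when the consumer falls behind, so latency stays bounded instead of growing with the backlog. The class below is a minimal sketch of that idea with a hypothetical name; it is not thread-safe as written and is not taken from any particular framework.

```python
from collections import deque
from typing import Deque, Generic, Optional, TypeVar

T = TypeVar("T")


class LatestFrameBuffer(Generic[T]):
    """Bounded FIFO that evicts the oldest item when full and counts drops,
    so the drop rate can be exported to a monitoring system."""

    def __init__(self, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self._items: Deque[T] = deque(maxlen=capacity)
        self.dropped = 0

    def push(self, item: T) -> None:
        if len(self._items) == self._items.maxlen:
            self.dropped += 1  # deque(maxlen=...) evicts the oldest on append
        self._items.append(item)

    def pop(self) -> Optional[T]:
        """Return the oldest buffered item, or None if the buffer is empty."""
        return self._items.popleft() if self._items else None


# Simulate a producer that outpaces the consumer: 5 frames into 3 slots.
buffer: LatestFrameBuffer[int] = LatestFrameBuffer(capacity=3)
for frame_id in range(5):
    buffer.push(frame_id)
print(buffer.dropped, buffer.pop())
```

Dropping stale frames suits live video analytics, where the newest frame matters most. Pipelines that must not lose data, such as audit logs, need a different policy, for example blocking the producer or spilling to disk.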

Hardware for drone and AI-related use cases represents a specialized area of development. Drones, for example, require lightweight and efficient systems that can process data in real time. By leveraging platforms like Jetson Orin Nano, WEZOM has worked on solutions that enable advanced capabilities such as object tracking, mapping, and autonomous navigation. These applications demonstrate the versatility and scalability of embedded AI systems.

Things to Consider Before Buying

Before choosing NVIDIA Jetson Orin Nano, it is important to align the hardware capabilities with your project requirements and constraints. Despite strong Jetson Orin Nano capabilities, factors such as power limits, performance needs, and integration complexity can directly impact deployment success. For example, the device operates within a relatively low power range (around 7W–25W), which is efficient but may require careful optimization for demanding workloads.

- Budget – while Orin Nano offers high performance, the total cost includes development, integration, and scaling.

- Power requirements – limited power envelope improves efficiency but may constrain peak performance in intensive AI scenarios.

- Project complexity – advanced neural network and real-time systems require experienced teams and optimization efforts.

- Hardware limitations – compact design (e.g., 6-core CPU, Ampere GPU, 8GB memory) may restrict large-scale model deployment.

- Ecosystem compatibility – ensure frameworks, CUDA-based tools, and existing infrastructure align with NVIDIA Jetson Orin.
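A quick way to sanity-check the memory constraint mentioned above is to estimate the size of a model's weights at different precisions. The sketch below is a rough lower bound only: it ignores activations, framework overhead, and input buffers. The `reserved_gb` headroom is a hypothetical allowance for the operating system and other processes, which matters on Jetson modules because the CPU and GPU share the same physical memory.

```python
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weights_gb(num_params: int, precision: str) -> float:
    """Approximate size of the model weights in decimal gigabytes."""
    if precision not in BYTES_PER_PARAM:
        raise ValueError(f"unknown precision: {precision}")
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits(num_params: int, precision: str,
         device_gb: float = 8.0, reserved_gb: float = 2.0) -> bool:
    """True if the weights alone fit in the memory left after the reserve.
    device_gb defaults to the 8GB figure quoted in the list above;
    reserved_gb is an illustrative assumption, not a measured value."""
    return weights_gb(num_params, precision) <= device_gb - reserved_gb


# Hypothetical 2-billion-parameter model.
for precision in ("fp32", "fp16", "int8"):
    size = weights_gb(2_000_000_000, precision)
    print(precision, f"{size:.1f} GB", fits(2_000_000_000, precision))
```

With these assumptions, the example model does not fit at FP32 but does at FP16 or INT8, which is why precision reduction is usually part of planning for this class of device.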

FAQ

What is NVIDIA Jetson Orin Nano used for?

The NVIDIA Jetson Orin Nano is used for deploying AI applications at the edge, where data is processed directly on the device rather than in the cloud. Common use cases include computer vision, robotics, industrial automation, and smart IoT systems. It is particularly valuable in scenarios requiring real-time inference, low latency, and data privacy. Businesses use it to build intelligent systems that can operate independently and respond instantly to changing conditions.

What is the difference between Jetson Nano and Jetson Orin Nano?

The primary difference lies in performance and architecture. The Jetson Orin Nano is based on the newer NVIDIA Ampere GPU architecture, whereas the original Jetson Nano uses the older Maxwell architecture, and it offers significantly higher AI performance as a result. It supports more complex models, higher-resolution data processing, and multiple concurrent workloads. Additionally, it includes improved power efficiency and better support for modern AI frameworks, making it more suitable for production-level applications.

What are the main specifications of Jetson Orin Nano?

The platform includes a multi-core CPU, an NVIDIA Ampere-based GPU, high-bandwidth memory, and support for various interfaces such as USB and camera inputs. It delivers substantial AI performance measured in TOPS, enabling it to run multiple neural networks simultaneously. The system is designed for energy efficiency and compact deployment, making it suitable for embedded AI applications across industries.

How powerful is NVIDIA Jetson Orin Nano?

The NVIDIA Jetson Orin Nano offers a significant increase in computational power compared to earlier Jetson devices. It can handle complex AI models, real-time video processing, and multi-stream analytics. While it is not a replacement for high-end GPU servers, it provides a practical balance between performance and efficiency for edge deployments. Its capabilities make it suitable for demanding applications such as robotics, surveillance, and industrial automation.

What is the price of the Jetson Orin Nano Developer Kit?

The price of the NVIDIA Jetson Orin Nano developer kit varies depending on the configuration and market conditions, but it is generally positioned as an affordable entry point into the Jetson Orin ecosystem. Organizations should consider not only the hardware cost but also expenses related to development, integration, and maintenance. Evaluating the total cost of ownership helps ensure that the investment aligns with business objectives and delivers long-term value.